I’ve been building MinimalChat for a while now, and based on the feedback I’ve received, it’s in a pretty decent place for general use. I figured I’d share it here for anyone who might be interested!

Quick Features Overview:

- Mobile PWA Support: Install the site like a normal app on any device.

- Support for Any OpenAI-Format API: Works with LM Studio, OpenRouter, etc.

- Local Storage: All data is stored locally in the browser with minimal setup. With Docker, just pick a port and go.

- Experimental Conversational Mode (GPT Models for now)

- Basic File Upload and Storage Support: Files are stored locally in the browser.

- Vision Support with Maintained Context

- Regen/Edit Previous User Messages

- Swap Models Anytime: Maintain conversational context while switching models.

- Set/Save System Prompts: Prompts are also saved to a list so you can switch between them easily.
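
To illustrate how swapping models can keep the conversation intact, here is a minimal Python sketch. It is not MinimalChat's actual code (the app is a web app); the `Conversation` class and its methods are hypothetical names, and the two model IDs are only examples. The idea is that the message history lives apart from the model choice, so the model becomes just a per-request parameter.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical chat state, kept independent of whichever model answers next."""
    system_prompt: str = "You are a helpful assistant."
    messages: list = field(default_factory=list)       # user/assistant turns only
    saved_prompts: list = field(default_factory=list)  # system prompts used so far

    def set_system_prompt(self, prompt: str) -> None:
        # Remember each distinct prompt so it can be selected again later.
        if prompt not in self.saved_prompts:
            self.saved_prompts.append(prompt)
        self.system_prompt = prompt

    def add(self, role: str, content: str) -> None:
        self.messages.append({"role": role, "content": content})

    def build_payload(self, model: str) -> dict:
        # The model is chosen per request; the history is sent unchanged.
        system = {"role": "system", "content": self.system_prompt}
        return {"model": model, "messages": [system, *self.messages]}


chat = Conversation()
chat.set_system_prompt("Answer briefly.")
chat.add("user", "What is a PWA?")
chat.add("assistant", "A web app that can be installed like a native app.")
chat.add("user", "Does it work offline?")

# Same history, two different (example) model IDs.
first = chat.build_payload("gpt-4o")
second = chat.build_payload("meta-llama/llama-3-70b-instruct")
```

Both payloads carry identical `messages`, which is what lets a different model pick up the conversation where the previous one left off.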

The idea is to make it essentially foolproof to deploy or set up while being generally full-featured and aesthetically pleasing. No additional databases or servers are needed; everything is contained and managed locally inside the web app itself.

It’s another chat client in a sea of clients, but in my opinion it’s unique in its own ways. Enjoy! Feedback is always appreciated!

Self Hosting Wiki Section: https://github.com/fingerthief/minimal-chat/wiki/Self-Hosting-With-Docker

Will it work with Ollama?

I haven’t personally tried it with Ollama yet, but it should work, since it looks like Ollama can expose an OpenAI-compatible API: https://github.com/ollama/ollama/blob/main/docs/openai.md

I might give it a go in a bit to test and confirm.
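
For anyone who wants to check this themselves, here is a rough Python sketch of what an OpenAI-style chat request to Ollama could look like. It assumes Ollama is running on its default local address (`http://localhost:11434`) and that a model named `llama3` has been pulled; `build_chat_request` is a hypothetical helper, not part of MinimalChat or Ollama. The sketch only builds the request, and the lines that would actually send it are left commented out.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; adjust if yours differs.
OLLAMA_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(base_url: str, model: str, user_text: str,
                       api_key: str = "ollama") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Placeholder key; a local server is not expected to check it.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_chat_request(OLLAMA_BASE_URL, "llama3", "Hello!")

# To actually send it (needs a running Ollama server with the model pulled):
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

If that request works from a script, pointing a client at the same base URL should in principle work too, since the client sends the same kind of request.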

Sounds interesting! May I ask where the data used to train came from?

This app is more of an interface for connecting to any number of LLMs that have an API available. The application itself has no model.

For example, you can choose to use GPT-4 Omni by providing an API key from OpenAI.

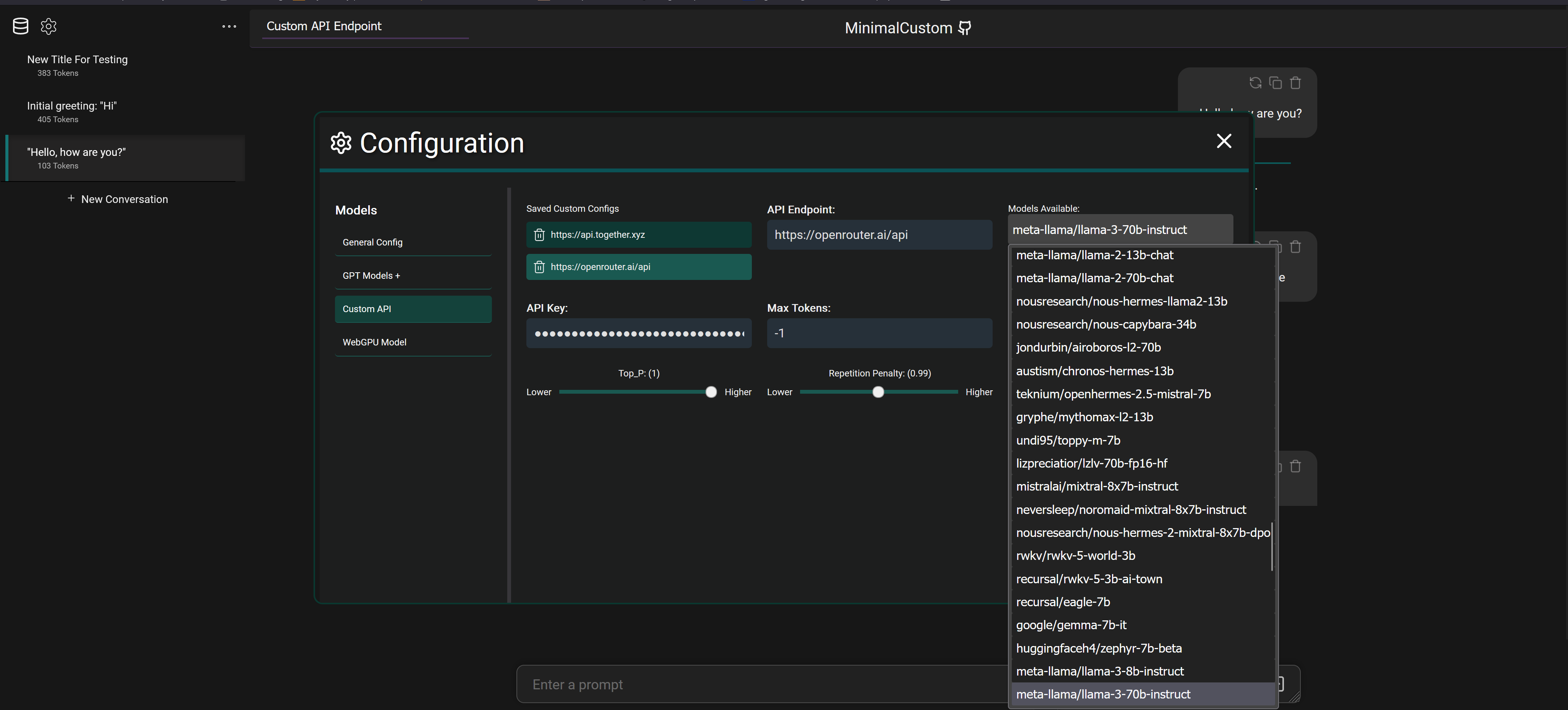

But you can also connect to services like OpenRouter with an API key and select between the 20+ different models they provide access to.

It also supports connecting to fully local models via programs like LM Studio, which downloads models from Hugging Face to your machine and spins up a local API so you can connect and chat with the model.

Thanks for clarifying. Cool project! I’ve been looking for a guilt-free LLM that sourced its training data in an ethical way. Tell me if I’m way off base, but I take it your app is to LLMs what Ice Cubes is to the fediverse: an interface. Nice!

I wish you well with your project. If any of the models you work with fit what I’m looking for, or you know of any such models please let me know!

Yep that’s a pretty good comparison!

I’m curious about what you mean by sourcing training data in an ethical way. I know OpenAI has come under well-deserved scrutiny for apparently using content hidden behind paywalls in their training data without purchasing it themselves. That is quite unethical, but aside from that instance I’m interested in hearing some other concerns for my own education.

In general, there are loads of fully open source models on places like Hugging Face, and many of them document their training data sources.

I believe that for Microsoft’s new Phi 3 models, they actually generated synthetic data themselves for training, which is an interesting approach that seems to yield good results.

In the open source LLM world, the new Meta Llama 3 models are the latest and greatest, and I haven’t seen any cause for concern with them yet. Might be worth looking into those!

If you want to make it more unique than ‘just another ChatGPT client’, you could try adding local model support, not sure how difficult that would be.

Local models are indeed already supported! In fact any API (local or otherwise) that uses the OpenAI response format (which is the standard) will work.

So you can use something like LM Studio to host a model locally and connect to it via the local API it spins up.
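
As a rough illustration of what "uses the OpenAI response format" means in practice, here is a small Python sketch that pulls the assistant's reply out of a response body. The sample body is made up for illustration, and `extract_reply` is a hypothetical helper rather than anything from MinimalChat; the point is only that a client can read any compatible server's response the same way.

```python
import json

# Sample body in the shape of an OpenAI-style chat completion response.
# All values here are invented for illustration.
sample_response = json.dumps({
    "id": "chatcmpl-123",
    "object": "chat.completion",
    "model": "local-model",
    "choices": [
        {
            "index": 0,
            "message": {"role": "assistant", "content": "Hello from a local model!"},
            "finish_reason": "stop",
        }
    ],
    "usage": {"prompt_tokens": 9, "completion_tokens": 6, "total_tokens": 15},
})


def extract_reply(raw_body: str) -> str:
    """Return the first choice's message text from an OpenAI-style response."""
    data = json.loads(raw_body)
    choices = data.get("choices") or []
    if not choices:
        raise ValueError("response has no choices")
    return choices[0]["message"]["content"]


reply = extract_reply(sample_response)
```

Because the reply always sits at `choices[0].message.content`, the same parsing works whether the body came from a hosted service or a local server.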

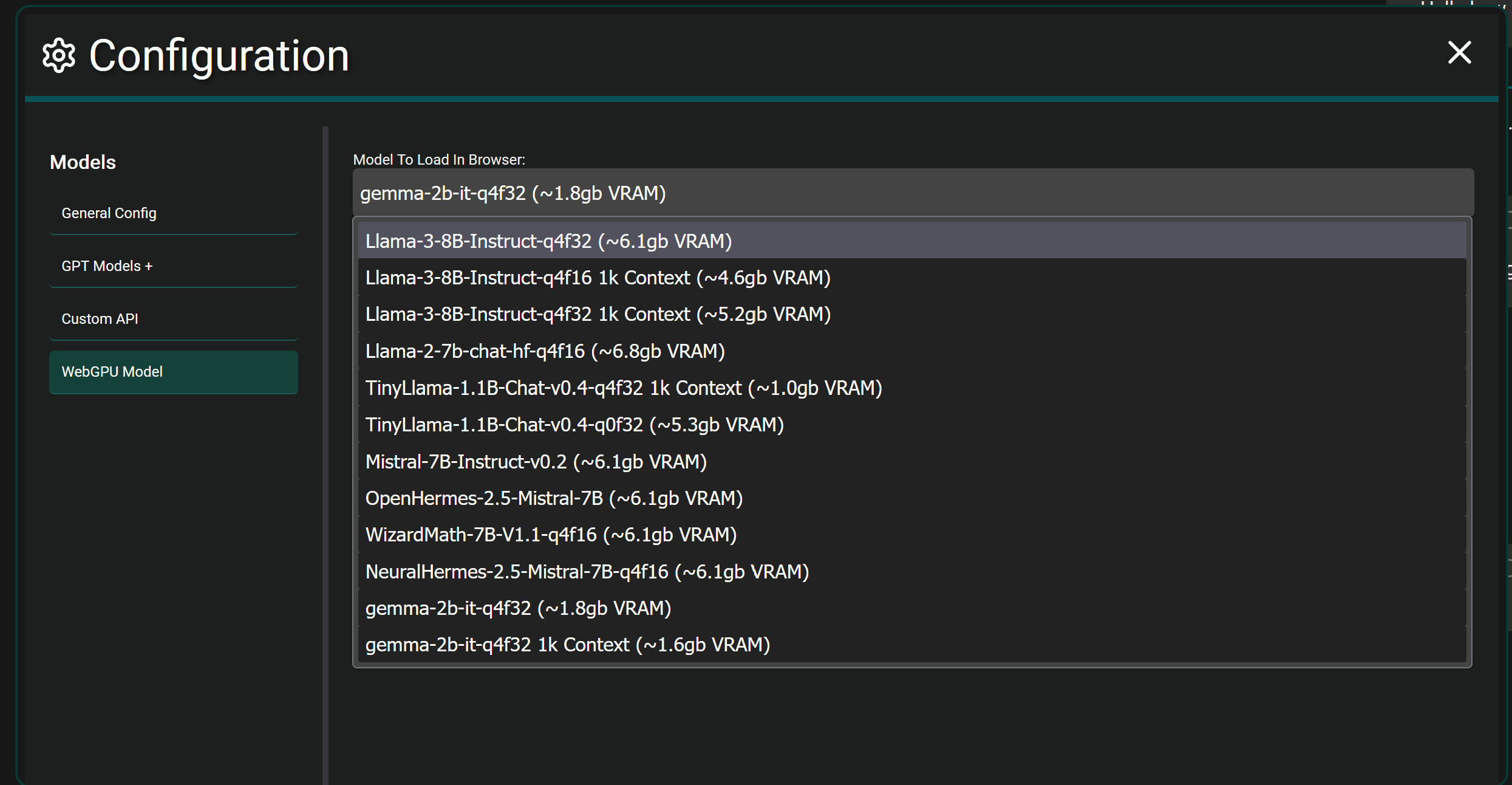

If you want to get crazy… fully local browser models are also supported, currently in Chrome and Edge. It will download the selected model in full, run it in your browser via WebGPU, and let you chat. It’s more experimental and takes real hardware power, since you’re hosting a model entirely in the browser itself.

Cool, thanks!

https://github.com/oobabooga/text-generation-webui/wiki/12-‐-OpenAI-API like this? ;)

Pretty sure this is what you want and why it’s not duplicated effort

I use Jan already, and I like that it’s a native app rather than a webui; I don’t really like webuis. I wasn’t saying there weren’t any local model apps, but that there are far fewer of them than glorified ChatGPT clients.

And if they were going to make theirs cross platform, it would in fact be the first FOSS local model app for Android. (Layla Lite exists but is not FOSS).

Does it have tool use? What language is it? Does it use langchain?

This project is entirely web based, using Vue 3. It doesn’t use langchain, and honestly I haven’t looked into it before, but I do see they offer a JS library I could utilize. I’ll definitely be looking into that!

As a result, there is no LLM function calling currently, and from what I remember apps like LM Studio don’t support function calling when hosting models locally. It’s definitely on my list to add the ability to retrieve outside data, like searching the web and generating a response with the results, etc…
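
For context on what function calling could involve, here is a hedged Python sketch using the OpenAI-style `tools` message shapes. Nothing here exists in MinimalChat today: `WEB_SEARCH_TOOL`, `fake_web_search`, and `run_tool_calls` are hypothetical names, and the search function is a stub rather than a real search backend. The sketch shows a tool definition, a model message asking to call it, and the `tool` message a client would send back.

```python
import json

# Hypothetical tool definition in the OpenAI-style "tools" format.
WEB_SEARCH_TOOL = {
    "type": "function",
    "function": {
        "name": "web_search",
        "description": "Search the web and return a short summary of results.",
        "parameters": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
}


def fake_web_search(query: str) -> str:
    # Stand-in for a real search backend.
    return f"(stub) top results for: {query}"


TOOL_HANDLERS = {"web_search": fake_web_search}


def run_tool_calls(assistant_message: dict) -> list:
    """Execute each requested tool call and build the follow-up 'tool' messages."""
    results = []
    for call in assistant_message.get("tool_calls", []):
        handler = TOOL_HANDLERS[call["function"]["name"]]
        args = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        results.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": handler(**args),
        })
    return results


# Example of what a model might return when it wants to call the tool.
assistant_message = {
    "role": "assistant",
    "content": None,
    "tool_calls": [{
        "id": "call_1",
        "type": "function",
        "function": {
            "name": "web_search",
            "arguments": json.dumps({"query": "Vue 3 release date"}),
        },
    }],
}

tool_messages = run_tool_calls(assistant_message)
```

The resulting `tool` messages would be appended to the conversation and sent back so the model can write its final answer from the search results.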

I wrote this https://github.com/muntedcrocodile/Sydney

Been having decent success with it but gotta implement proper document embedding.