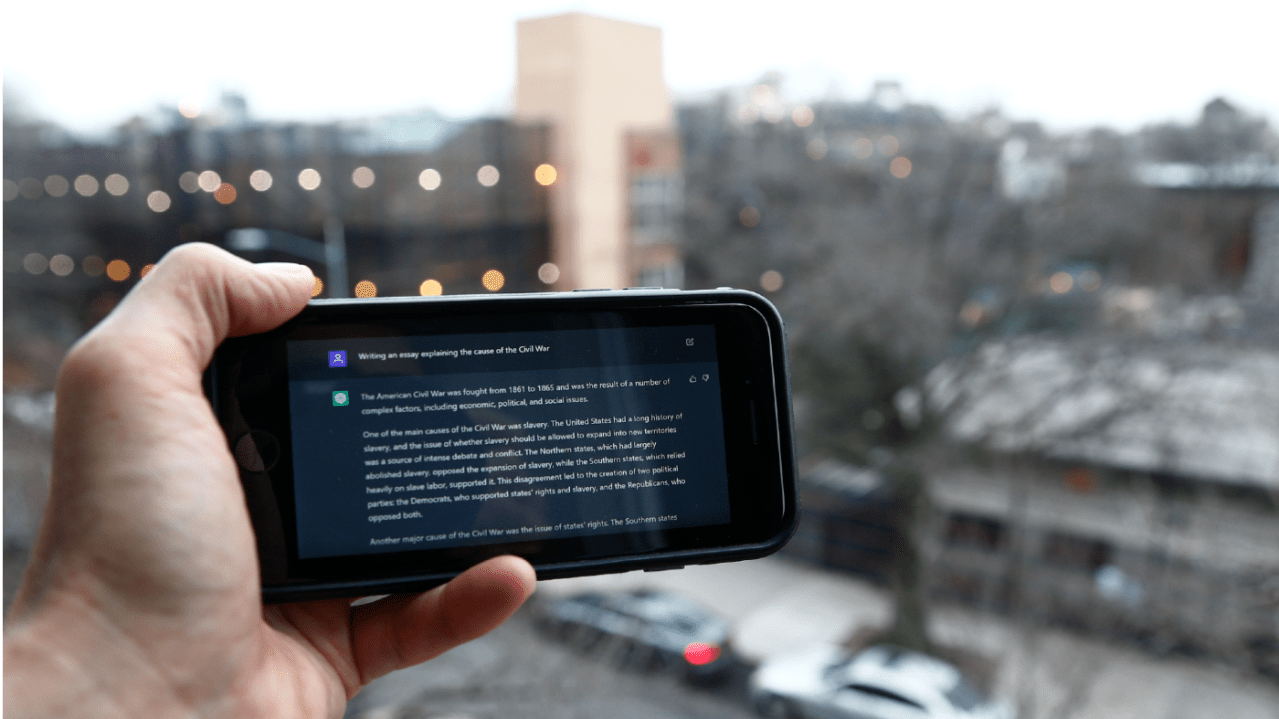

“To prevent disinformation from eroding democratic values worldwide, the U.S. must establish a global watermarking standard for text-based AI-generated content,” writes retired U.S. Army Col. Joe Buccino in an opinion piece for The Hill. While President Biden’s October executive order requires watermarking of AI-derived video and imagery, it offers no watermarking requirement for text-based content. “Text-based AI represents the greatest danger to election misinformation, as it can respond in real-time, creating the illusion of a real-time social media exchange,” writes Buccino. “Chatbots armed with large language models trained with reams of data represent a catastrophic risk to the integrity of elections and democratic norms.”

Joe Buccino is a retired U.S. Army colonel who serves as an A.I. research analyst with the U.S. Department of Defense's Defense Innovation Board. He served as U.S. Central Command communications director from 2021 until September 2023. Here’s an excerpt from his opinion piece:

Watermarking text-based AI content involves embedding unique, identifiable information – a digital signature documenting the AI model used and the generation date – into the metadata of the generated text to indicate its artificial origin. Detecting this digital signature requires specialized software, which, when integrated into platforms where AI-generated text is common, enables the automatic identification and flagging of such content. This process gets complicated in instances where AI-generated text is manipulated slightly by the user. For example, a high school student may make minor modifications to a homework essay created through Chat-GPT4. These modifications may drop the digital signature from the document. However, that kind of scenario is not of great concern in the most troubling cases, where chatbots are let loose in massive numbers to accomplish their programmed tasks. Disinformation campaigns require such a large volume of them that it is no longer feasible to modify their output once released.

The U.S. should create a standard digital signature for text, then partner with the EU and China to lead the world in adopting this standard. Once such a global standard is established, the next step will follow – social media platforms adopting the metadata recognition software and publicly flagging AI-generated text. Social media giants are sure to respond to international pressure on this issue. The call for a global watermarking standard must navigate diverse international perspectives and regulatory frameworks. A global standard for watermarking AI-generated text ahead of 2024’s elections is ambitious – an undertaking that encompasses diplomatic and legislative complexities as well as technical challenges. A foundational step would involve the U.S. publicly accepting and advocating for a standard of marking and detection. This must be followed by a global campaign to raise awareness about the implications of AI-generated disinformation, involving educational initiatives and collaborations with the giant tech companies and social media platforms.

In 2024, generative AI and democratic elections are set to collide. Establishing a global watermarking standard for text-based generative AI content represents a commitment to upholding the integrity of democratic institutions. The U.S. has the opportunity to lead this initiative, setting a precedent for responsible AI use worldwide. The successful implementation of such a standard, coupled with the adoption of detection technologies by social media platforms, would represent a significant stride towards preserving the authenticity and trustworthiness of democratic norms.

Excerpt credit: https://slashdot.org/story/423285

There are ways to watermark plaintext. But they’re relatively brittle, because the signal degrades as the output is further modified, and you also need to know which specific LLM’s watermark you’re looking for.

So it’s not a great solution on its own, but it could be part of something more comprehensive.
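To make the brittleness concrete, here is a toy sketch of one family of plaintext watermarks, the statistical "green list" kind. It is not how any particular vendor does it. The key, the word-level "tokens", and the stand-in generator that just picks random words are all assumptions for illustration. The idea: a secret key plus the previous token marks about half the vocabulary as "green", the generator prefers green tokens, and the detector counts how many green tokens it sees compared to chance.

```python
import hashlib
import math
import random

GAMMA = 0.5                      # fraction of tokens counted as "green" at each step
KEY = b"hypothetical-model-key"  # assumed secret shared by generator and detector


def is_green(prev_token: str, token: str, key: bytes = KEY) -> bool:
    """Keyed pseudo-random coin flip: is `token` green when it follows `prev_token`?"""
    data = key + b"|" + prev_token.encode() + b"|" + token.encode()
    return hashlib.sha256(data).digest()[0] < 256 * GAMMA


def z_score(tokens, key: bytes = KEY) -> float:
    """How many standard deviations the green count sits above pure chance."""
    n = len(tokens) - 1
    if n <= 0:
        return 0.0
    green = sum(is_green(p, t, key) for p, t in zip(tokens, tokens[1:]))
    return (green - GAMMA * n) / math.sqrt(n * GAMMA * (1 - GAMMA))


def generate_watermarked(vocab, length, rng, key: bytes = KEY):
    """Stand-in for an LLM: sample 8 candidate words, emit a green one if any exists."""
    tokens = [rng.choice(vocab)]
    while len(tokens) < length:
        candidates = rng.sample(vocab, 8)
        green = [c for c in candidates if is_green(tokens[-1], c, key)]
        tokens.append(green[0] if green else candidates[0])
    return tokens


def perturb(tokens, vocab, fraction, rng):
    """Simulate human edits by replacing roughly `fraction` of the tokens at random."""
    return [rng.choice(vocab) if rng.random() < fraction else t for t in tokens]


if __name__ == "__main__":
    rng = random.Random(0)
    vocab = [f"w{i}" for i in range(500)]
    marked = generate_watermarked(vocab, 200, rng)
    print("untouched text:  ", round(z_score(marked), 1))
    print("half the words edited:", round(z_score(perturb(marked, vocab, 0.5, rng)), 1))
    print("wrong key:       ", round(z_score(marked, b"some-other-model"), 1))
```

Running it shows both weaknesses: the score drops as more of the text is edited, and a detector checking with the wrong key sees nothing but noise.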

As for non-plaintext file formats…

A simple signature would indeed give us a source but not method, but I think that’s probably 90% of what we care about when it comes to mass disinformation. If an article or an image is signed by Reuters, you can probably trust it. If it’s signed by OpenAI or Stability, you probably can’t. And if it’s not signed at all or signed by some rando, you should remain skeptical.

But there are efforts like C2PA that include a log of how the asset was changed over time, providing a much more detailed explanation of what was done explicitly by humans vs. generative automated tools.
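As a rough illustration of the "log of how the asset was changed" idea, here is a minimal hash-chained edit history. To be clear, this is not the C2PA manifest format. The field names (`actor`, `tool`, `action`) and the helper functions are made up for this sketch, and real provenance systems also cryptographically sign the entries, which is omitted here. Without signatures the chain only shows the linking mechanism, since anyone could rebuild the whole chain from scratch.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(body: dict) -> str:
    """Hash an entry's fields in a stable (sorted-key) JSON encoding."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, actor, tool, action, asset_bytes):
    """Return a new log with one more entry linked to the previous entry's hash."""
    body = {
        "actor": actor,    # who made the change (hypothetical field)
        "tool": tool,      # e.g. "camera", "photo editor", "generative fill"
        "action": action,  # free-text description of the change
        "asset_sha256": hashlib.sha256(asset_bytes).hexdigest(),
        "prev": log[-1]["hash"] if log else GENESIS,
    }
    return log + [{**body, "hash": entry_hash(body)}]


def verify(log, final_asset: bytes) -> bool:
    """Check every link in the chain and that the last entry matches the asset."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body.get("prev") != prev or entry_hash(body) != entry.get("hash"):
            return False
        prev = entry["hash"]
    return bool(log) and log[-1]["asset_sha256"] == hashlib.sha256(final_asset).hexdigest()


if __name__ == "__main__":
    log = append_entry([], "photographer", "camera", "capture", b"raw pixels")
    log = append_entry(log, "editor", "generative fill", "removed background", b"edited pixels")
    print(verify(log, b"edited pixels"))  # chain intact, asset matches last entry
    log[1]["tool"] = "manual crop"        # try to hide the generative step
    print(verify(log, b"edited pixels"))  # the altered entry no longer matches its hash
```

The useful property is that each step records which tool touched the asset, so a reader can see where generative tooling entered the history instead of getting a single yes/no answer.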

I understand the concern about privacy, but it’s not like you have to use a format that supports proving that an image is legit. But if you want to prove that it is legit, then you have to provide something that grounds it in reality. It doesn’t have to be personally-identifying. It could just be a key baked into your digital camera (assuming that the resulting signature is strong enough that it’s computationally expensive to try to reverse-engineer the key and find who bought the camera).

If you think about it, it’s kind of crazy that we’ve made it this far with a trust model that’s no more sophisticated than “I can tell from the pixels and from seeing quite a few shops in my time”.