Summary

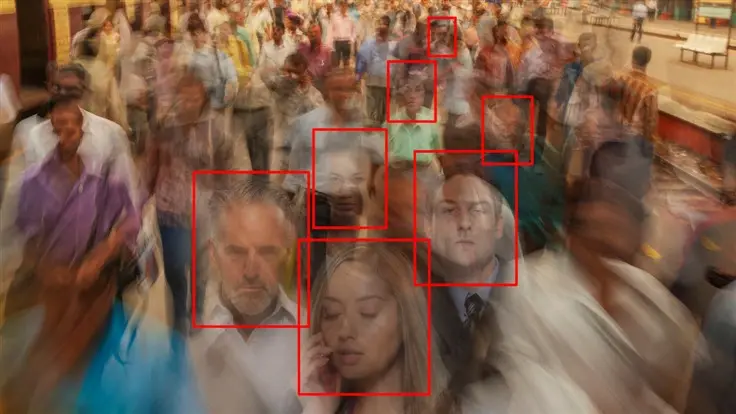

- Detroit woman wrongly arrested for carjacking and robbery due to facial recognition technology error.

- Porcha Woodruff, 8 months pregnant, mistakenly identified as culprit based on outdated 2015 mug shot.

- Surveillance footage did not match the identification; victim wrongly identified Woodruff from lineup based on the outdated 2015 photo.

- Woodruff arrested, detained for 11 hours, charges later dismissed; she files lawsuit against Detroit.

- Facial recognition technology’s flaws in identifying women and people with dark skin highlighted.

- Several US cities banned facial recognition; debate continues due to lobbying and crime concerns.

- Law enforcement prioritized technology’s output over visual evidence, raising questions about its integration.

- ACLU Michigan involved; outcome of lawsuit uncertain, impact on law enforcement’s tech use in question.

Facial recognition (and AI) is a great tool to use as a double check.

“Hey, I think we found her because her car is parked there. Is that her?” The AI says it’s 90% sure it’s her. Sounds good, let’s go.

Or entering through an airport gate after scanning your passport. Great double check.

It should never be the first line for picking people out of a crowd. That’s how we slide into the dystopia.

You’re right. Hopefully they will have rules like this. But knowing police, even if these rules did exist, many departments would still go “Facial match? Good enough.”