I’m usually the one saying “AI is already as good as it’s gonna get, for a long while.”

This article, in contrast, is quotes from folks making the next AI generation - saying the same.

It’s absurd that some of the larger LLMs now use hundreds of billions of parameters (e.g. llama3.1 with 405B).

This doesn’t really seem like a smart use of resources if you need several of the largest GPUs available to even run one conversation.
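
For a rough sense of scale, here is a back-of-the-envelope sketch of the memory needed just to hold the weights. The 16-bit weights and 80 GB of memory per GPU are assumptions for illustration, and the estimate ignores the KV cache and activations, so real requirements would be higher.

```python
import math

def gpus_for_weights(n_params: float, bytes_per_param: float, gpu_mem_gb: float) -> int:
    """Minimum number of GPUs needed to hold the weights alone."""
    weights_gb = n_params * bytes_per_param / 1e9
    return math.ceil(weights_gb / gpu_mem_gb)

# Assumed: 405B parameters, 2 bytes each (16-bit), 80 GB per GPU.
print(gpus_for_weights(405e9, 2, 80))  # 810 GB of weights -> 11 GPUs
```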

I wonder how many GPUs my brain is

It’s a lot. Like a lot a lot. GPUs have about 150 billion transistors but those transistors only make 1 connection in what is essentially printed in a 2d space on silicon.

Each neuron makes dozens of connections, and there are on the order of almost 100 billion neurons in a blobby lump of fat and neurons that takes up 3D space. Combine that with the fact that everything actually functions through patterns of multiple neurons firing, and you get an absurdly high ceiling on how powerful human brains can be.

At this point, I’m not sure there are enough GPUs in the world to mimic what a human brain can do.

That’s also just the electrical portion of our mind. There are whole levels of chemical, and chemical potentials at work. Neurones will fire differently depending on the chemical soup around them. Most of our moods are chemically based. E.g. adrenaline and testosterone making us more aggressive.

Our mind also extends out of our heads. Organ transplant recipients have noted personality changes, with food preferences being the most prevalent.

The neurons only deal with ‘fast’ thinking. ‘Slow’ thinking is far more complex and distributed.

42

Sounds generous.

You said GPUs, not CPUs and threading capabilities

The Answer to the Ultimate Question of Life, The Universe, and Everything

I don’t think your brain can be reasonably compared with an LLM, just like it can’t be compared with a calculator.

LLMs are based on neural networks which are a massively simplified model of how our brain works. So you kind of can as long as you keep in mind they are orders of magnitude more simple.

At some point it becomes so “simplified” it’s arguably just not the same thing, even conceptually.

It is conceptually the same thing. A series of interconnected neurons with a firing threshold and weighted connections.
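
As a minimal sketch of that description, here is the classic textbook threshold neuron: a weighted sum of inputs compared against a firing threshold. The weights and thresholds are made up for illustration, and modern networks replace the hard threshold with smooth activation functions.

```python
def neuron(inputs, weights, threshold):
    """Fire (1) if the weighted sum of the inputs reaches the threshold, else 0."""
    activation = sum(x * w for x, w in zip(inputs, weights))
    return 1 if activation >= threshold else 0

def layer(inputs, weight_rows, thresholds):
    """A layer is several neurons wired to the same inputs."""
    return [neuron(inputs, w, t) for w, t in zip(weight_rows, thresholds)]

# Two neurons with identical weights but different thresholds:
# the first behaves like AND, the second like OR.
weights = [[1.0, 1.0], [1.0, 1.0]]
thresholds = [2.0, 1.0]
for a in (0, 1):
    for b in (0, 1):
        print((a, b), layer([a, b], weights, thresholds))
```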

The simplification comes with how the information is transmitted and how our brain learns.

Many functions in the human body rely on quantum mechanical effects to function correctly. So to simulate it properly each connection really needs to be its own super computer.

But it has been shown to be able to encode information in a similar way. The learning part is not even close.

It is conceptually the same thing. […] The learning part is not even close.

Well… isn’t the “learning part” precisely the point? I don’t think anybody is excited about brains as “just” a computational device, rather the primary function of a brain is … learning.

No, we are nowhere close to learning as the human brain does. We don’t even really understand how it does at all.

The point is to encode solutions to problems that we can’t solve with standard programming techniques. Like vision, speech recognition and generation.

These problems are easy for humans and very difficult for computers. The same way maths is super easy for computers compared to humans.

By applying techniques our neurones use, computer vision and speech have come on in leaps and bounds.

We are decades from getting anything close to a computer brain.

That’s capitalism

Larger models train faster (need less compute), for reasons not fully understood. These large models can then be used as teachers to train smaller models more efficiently. I’ve used Qwen 14B (14 billion parameters, quantized to 6-bit integers), and it’s not too much worse than these very large models.

Lately, I’ve been thinking of LLMs as lossy text/idea compression with content-addressable memory. And 10.5GB is pretty good compression for all the “knowledge” they seem to retain.
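
The 10.5GB figure is just parameter count times bits per weight. A quick sketch, assuming exactly 14 billion parameters and ignoring the extra metadata (scales, any layers kept at higher precision) that real quantized files carry:

```python
def model_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Size of the weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

for bits in (16, 8, 6, 4):
    print(f"{bits:>2}-bit: {model_size_gb(14e9, bits):.1f} GB")
```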

I don’t think Qwen was trained with distillation, was it?

It would be awesome if it was.

Also you should try Supernova Medius, which is Qwen 14B with some “distillation” from some other models.

Hmm. I just assumed 14B was distilled from 72B, because that’s what I thought llama was doing, and that would just make sense. On further research it’s not clear if llama did the traditional teacher method or just trained the smaller models on synthetic data generated from a large model. I suppose training smaller models on a larger amount of data generated by larger models is similar though. It does seem like Qwen was also trained on synthetic data, because it sometimes thinks it’s Claude, lol.
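
For reference, the “traditional teacher method” is usually understood as training the student to match the teacher’s full output distribution, whereas training on synthetic data only sees the tokens the teacher happened to sample. A minimal sketch of the classic distillation loss, with made-up logits and an assumed temperature of 2; it is not a claim about how Llama or Qwen were actually trained:

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-softened softmax, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2

teacher = [4.0, 1.0, 0.5]  # made-up logits over a 3-token vocabulary
student = [2.0, 1.5, 1.0]
print(distillation_loss(teacher, student))  # positive: the student disagrees
print(distillation_loss(teacher, teacher))  # identical predictions give zero loss
```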

Thanks for the tip on Medius. Just tried it out, and it does seem better than Qwen 14B.

Llama 3.1 is not even a “true” distillation either, but it’s kinda complicated, like you said.

Yeah Qwen undoubtedly has synthetic data lol. It’s even in the base model, which isn’t really their “fault” as it’s presumably part of the web scrape.

I understand folks don’t like AI but this “article” is like a reddit post with lots of links to subjects which are vague and need the link text to tell us what is important, instead of relying on the actual article.

What the fuck, you aren’t kidding. I have comment replies to trolls that are longer than that article. The over-the-top citations also make me think this was entirely written by an actual AI bot that was prompted to supply x amount of sources in its article. Lol

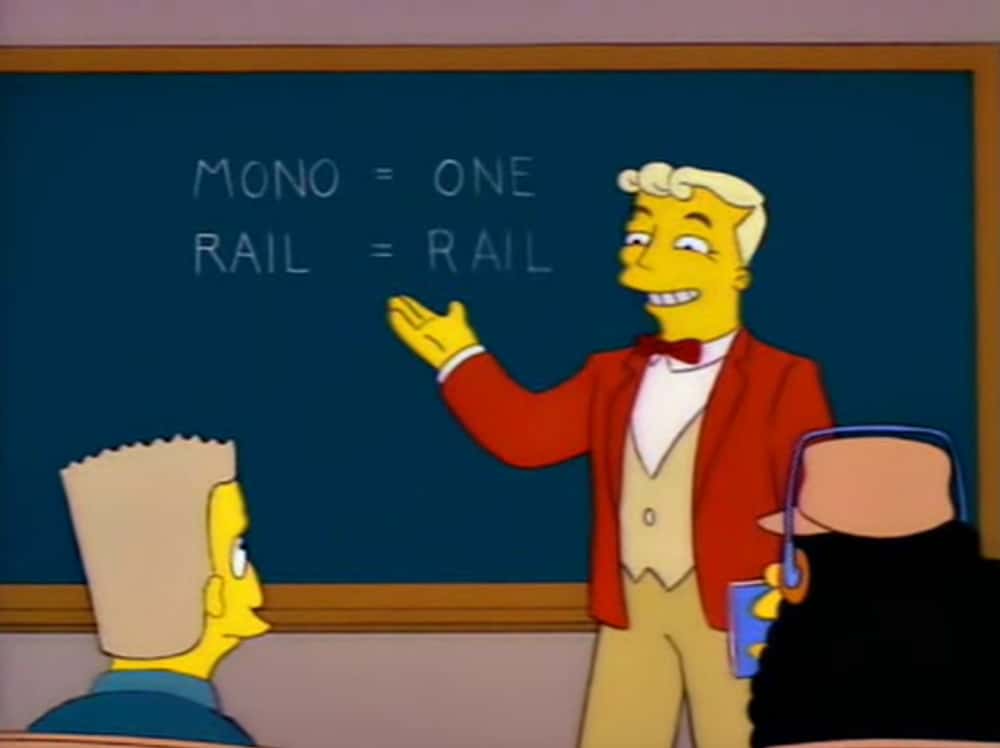

repeat after me: LLMs are not AI.

LLMs are one version of AI. It’s just one tiny part of AIs that are used every day, from chess bots to voice transcription, but they also are AI.

I would replace the word version with aspect. LLMs are merely one part of the puzzle that would be AI. Essentially what’s been constructed is the mouth and the part of the brain that can form words but without any of the reasoning or intelligence behind what the mouth says.

The same goes for the art AIs. They can paint pictures based on input but they can’t reason how those pictures should look. Which is why it requires so much tweaking to get them to output something that doesn’t look like it came out of a Lovecraft novel.

I don’t believe the “I” is an accurate term.

More like “Smart” Word generators.

Of course it changes meaning if you remove the qualifier.

Artificial

Adjective

artificial (comparative more artificial, superlative most artificial)

Man-made; made by humans; of artifice.

The flowers were artificial, and he thought them rather tacky.

Insincere; fake, forced or feigned.

Her manner was somewhat artificial.

In effect, man-made/fake intelligence.

I think you are confusing AI with AGI.

https://en.m.wikipedia.org/wiki/Artificial_general_intelligence

Not at all. AI is something that uses rules, not statistical guesswork. A simple control loop is already basic AI, but the core mechanism of LLMs is not (the parts before and after token association/prediction are). Don’t fall for marketing bullshit of some dumbass silicon valley snake oil vendors.

OpenAI, Google, Anthropic admit they can’t scale up their chatbots any further

Lol, no they didn’t. The quotes this article is using are talking about LLMs, not chatbots. This is yet another stupid article from someone who doesn’t understand the technology. There is a lot of legitimate criticism for the way this technology is being implemented but FFS get the basics right at least.

Are you asserting that chatbots are so fundamentally different from LLMs that “oh shit we can’t just throw more CPU and data at this anymore” doesn’t apply to roughly the same degree?

I feel like people are using those terms pretty well interchangeably lately anyway

People that don’t understand those terms are using them interchangeably

LLM is the technology, Chatbot is an implementation of it. So yes a Chatbot as it’s talked about here is an LLM. Although obviously chatbots don’t have to be LLM, those that are not are irrelevant.

No, a chat bot as it’s talked about here is not an LLM. This article is discussing limitations of LLM training data and inferring that chat bots can not scale as a result. There are many techniques that can be used to continue to improve chat bots.

The chatbot is a front end to an LLM, you are being needlessly pedantic. What the chatbot serves you, is the result of LLM queries.

That may have been true for the early LLM chatbots but not anymore. ChatGPT for instance, now writes code to answer logical questions. The o1 models have background token usage because each response is actually the result of multiple background LLM responses.

Yes, of course I’m asserting that. While the performance of LLMs may be plateauing, the cost, context window, and efficiency are still getting much better. When you chat with a modern chat bot it’s not just sending your input to an LLM like the first public version of ChatGPT. Nowadays a single chat bot response may require many LLM requests along with other techniques to mitigate the deficiencies of LLMs. Just ask the free version of ChatGPT a question that requires some calculation and you’ll have a better understanding of what’s going on and the direction of the industry.
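
To make the “chat bot is more than one LLM call” idea concrete, here is a toy sketch in which arithmetic is routed to a calculator tool and everything else goes to the model. `fake_llm`, `calculate`, and `chatbot` are hypothetical names invented for this example, the routing is a simple try/except rather than the model deciding when to call a tool, and none of it is meant to describe how ChatGPT is actually built.

```python
import ast
import operator

# Hypothetical stand-in for a real model call; it just echoes the prompt.
def fake_llm(prompt: str) -> str:
    return f"[LLM answer to: {prompt}]"

_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
        ast.Mult: operator.mul, ast.Div: operator.truediv}

def calculate(expression: str):
    """Evaluate +, -, *, / on plain numbers without using eval()."""
    def walk(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expression, mode="eval").body)

def chatbot(message: str) -> str:
    """Route arithmetic to the calculator tool, everything else to the LLM."""
    try:
        return str(calculate(message))
    except (ValueError, SyntaxError, ZeroDivisionError):
        return fake_llm(message)

print(chatbot("12 * (3 + 4)"))
print(chatbot("Why is the sky blue?"))
```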

I think you’re agreeing, just in a rude and condescending way.

There’s a lot of ways left to improve, but they’re not as simple as just throwing more data and CPU at the problem, anymore.

I’m sorry if I’m coming across as condescending, that’s not my intent. It’s never been “as simple as just throwing more data and CPU at the problem”. There were algorithmic challenges for every LLM evolution. There are still lots of potential improvements using the existing training data. But even if there wasn’t, we’ll still see loads of improvements in chat bots because of other techniques.

Edit: typo

Claiming that David Gerard and Amy Castor “don’t understand the technology” is uh… Hoo boy… Well it sure is a take.

The title of the article is literally a lie which is easily fact checked. Follow the links to quotes in the article to see what the quoted individuals actually said about the topic.

Please learn the difference between “lying” and “presenting a conclusion.”

I know the difference. None of OpenAI, Google, or Anthropic has admitted they can’t scale up their chat bots. That statement is not true.

So is your autism diagnosed or undiagnosed?

I ask this as an autistic person, because the only charitable way to read what’s happening here is that you’re clearly struggling with statements that aren’t intended to be read completely literally.

The only other way to read it is that you’re arguing in bad faith, but I’ll assume that’s not the case.

A 4 paragraph “article” lol

Are you suggesting “pivot-to-ai.com” isn’t the pinnacle of journalism?

Lol, I didn’t even notice the name

So long and thanks for all the fish habitat?

Though, I don’t think that means they won’t get any better. It just means they don’t scale by feeding in more training data. But that’s why OpenAI changed their approach and added some reasoning abilities. And we’re developing/researching things like multimodality etc… There’s still quite some room for improvements.

Though, I don’t think that means they won’t get any better. It just means they don’t scale by feeding in more training data.

Agreed. There’s plenty of improvement to be had, but the gravy train of “more CPU or more data == better results” sounds like it’s ending.

I smell a sentient AI trying to throw us off its plans for world domination…

Everyone ignore this comment please. I’m quite human. I have the normal 7 fingers (edit: on each of my three hands!) and everything.

Cylons. I knew it.

Can’t be, I haven’t fucked one yet, and everyone knows Cylonism is an STD.

Unless I’m an Eskimo brother and don’t know it…

It’s a known problem - though of course, because these companies are trying to push AI into everything and oversell it to build hype and please investors, they usually try to avoid recognizing its limitations.

Frankly I think that now they should focus on making these models smaller and more efficient instead of just throwing more compute at the wall, and actually train them to completion so they’ll generalize properly and be more useful.

Looks like the AI bubble is slowly coming to an end, just like what happened to the crypto and NFT bubble.

Sure, except for the thousands of products working pretty well with current gen. And it’s not like it’s over, now we’ve hit the limit of “just throw more data at the thing”.

Now there aren’t gonna be as many breakthroughs that make it better every few months, instead there’s gonna be thousand small improvements that make it more capable slowly and steadily. AI is here to stay.

The bubble popping doesn’t have to do with its staying power, just that the days of, “Hey, I invented this brand new AI that’s totally not just a wrapper for ChatGPT. Want to invest a billion dollars‽” are over. AGI is not “just out of reach.”

Getting the GPU memory requirements down would be huge as well.

When did the crypto bubble end? Bitcoin is at an all time high…

The bubble was when we were being sold block chain as the solution to every problem. I feel like that bubble ended in 2019 or 2020.

Things that actually benefitted from block chain are still around, of course.

Unrelated side rant: I’m pissed about pogs going away, though. Pogs were fun. I should still be able to buy pogs.

They might be right but I read some of the linked articles on this blog (?), the authors just come off as not really knowing much about current AI technologies, and at the same time very very arrogant.

The article talks about LLM developers / operators. Not sure how you got from that to “current AI technologies” - a completely unrelated topic.

I believe that the current LLM paradigm is a technological dead end. We might see a few additional applications popping up, in the near future; but they’ll be only a tiny fraction of what was promised.

My bet is that they’ll get superseded by models with hard-coded logic. Just enough to be able to correctly output “if X and Y are true/false, then Z is false”, without fine-tuning or other band-aid solutions.

Seems unlikely as that’s essentially what we had before and they were not very good at all.

If you’re referring to symbolic AI, I don’t think that the AI scene will turn 180° and ditch NN-based approaches. Instead what I predict is that we’ll see hybrids - where a symbolic model works as the “core” of the AI, handling the logic, and a neural network handles the input/output.

Unlikely, but there’s some precedent.

We’ve seen this pattern play out in video games a bunch of times.

Revolutionary new way to do things. It’s cool, but not… You know…fun.

So we give up on it as a dead end and go back to the old ways for a while.

Then somebody figures out how to (usually hard code) bumpers on the revolutionary new way, such that it stays fun.

Now the revolutionary new way is the new gold standard and default approach.

For other industries, replace “fun” above with the correct goal for that industry. “Profitable” is one that the AI hucksters are being careful not to say…but “honest”, “correct” and “safe” also come to mind.

We are right before the bit where we all decide it was a bad idea.

Which comes before we figure out hard-coding the bumpers can get us where we wanted, after a lot of work by really smart well paid humans.

I’ve seen industries skip the “all decide it was a bad idea” phase, and go straight to the “hard work by humans to make this fulfill the available promise” phase, but we don’t actually look on track to, today.

Many current investors are convinced that their clever talking puppet is going to do the hard work of engineering the next generation of talking puppet.

I have some faith that we can reach that milestone. I’m familiar enough with the current generation of talking puppet to confidently declare that this won’t be the time it happens.

My incentive in sharing all this is that I like over half of you reading here, and so figure I can give some of you a shot at not falling for this particular “investment phase” which is essentially, in practical terms, a con.